The YouTube algorithm knows me pretty well by now, so it decided I’d be interested in this video during a recent Zwift training ride:

Testing the accuracy of VO₂max on smartwatches? Sounds like the perfect blend of nerdiness and fitness tech. Sign me up, I thought. But I was soon left a little enraged. So let’s talk about why.

Why compare VO₂max?

This isn’t the first of these. Usually it’s the same story: a fitness influencer spends hundreds on a clinical VO₂max test, gets their “true” score, and then triumphantly ranks their smartwatches from “wildly inaccurate” to “shockingly close.”

These “n=1” experiments clearly make for monetisable entertainment, but from a scientific standpoint, they are profoundly misleading. They test a single data point against a complex algorithm built on thousands.

The real question isn’t whether your watch is accurate for you. The question is, how do we know if an algorithm is “accurate” at all? The answer lies in moving from a single anecdote to a proper statistical analysis.

Accuracy vs Precision vs Calibration

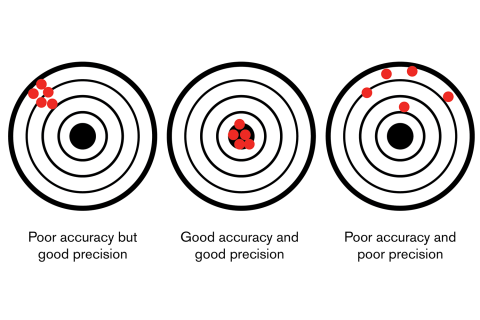

The first point is one of terminology. There are two related terms, which most people use interchangeably:

- Accuracy: How close is the measurement to the true, “gold standard” value? (e.g. the lab said 57, the watch said 56. That’s highly accurate).

- Precision (or Repeatability): How consistent are the measurements? (e.g. if your fitness doesn’t change, does the watch give you a 53 every single time? That’s high precision, even if your true score is 57).

An “n=1” test tells us only the accuracy for one person, at one moment in time. It tells us nothing about precision or long-run accuracy and, more importantly, nothing about our final term: calibration, or how the algorithm performs across the entire population of people using it.
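To make the distinction concrete, here is a minimal sketch using made-up numbers (one lab result and five repeated watch readings, all hypothetical). It treats accuracy as the average offset from the lab value and precision as the scatter of the repeated readings.

```python
from statistics import mean, stdev

# Hypothetical numbers for illustration only: one lab result and
# five repeated watch readings taken while fitness is unchanged.
lab_vo2max = 57.0
watch_readings = [53.0, 53.0, 54.0, 53.0, 52.0]

# Accuracy: how far the typical reading sits from the lab value (the bias).
bias = mean(watch_readings) - lab_vo2max

# Precision: how much the repeated readings scatter around their own mean.
spread = stdev(watch_readings)

print(f"bias = {bias:+.1f} ml/kg/min, spread (SD) = {spread:.2f} ml/kg/min")
```

With these invented readings the watch is consistently about 4 ml/kg/min low but barely moves between readings: poor accuracy, good precision.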

How to actually test these smartwatches

We now need to consider how these watches (and other “smart” devices) estimate VO2max.

Whereas we’d calculate VO2max on a CPEX test by analysing the oxygen and carbon dioxide in the air breathed in and out, the watches use algorithms based on the relationship between heart rate and pace or power, adjusted for age, gender, weight, etc. These algorithms are essentially derived from a large number of users whose lab VO2max is known, with a regression fitted to predict it from these variables.

Garmin have published a white paper explaining how their algorithm does it, and the other manufacturers’ approaches are likely to be very similar.
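As a toy illustration of the regression idea (and emphatically not Garmin’s or anyone else’s actual algorithm), the sketch below fits a straight line from a single invented predictor to lab VO2max. The predictor (running speed sustained at a fixed heart rate), the data and the function names are all assumptions made up for the example; a real model would use many more users and variables.

```python
from statistics import mean

def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar

# Synthetic "training" data, invented for illustration:
# speed (km/h) each person sustains at the same heart rate,
# alongside their lab-measured VO2max (ml/kg/min).
speed_kmh = [8.0, 9.0, 10.0, 11.0, 12.0, 13.0]
lab_vo2max = [36.0, 41.0, 44.0, 50.0, 53.0, 58.0]

slope, intercept = fit_line(speed_kmh, lab_vo2max)

def estimate_vo2max(speed):
    """Predict VO2max for a new user from the fitted line."""
    return slope * speed + intercept

print(f"Estimated VO2max at 10.5 km/h: {estimate_vo2max(10.5):.1f}")
```

The point is only that the watch never measures oxygen: it looks up where you sit on a line (or surface) fitted to other people’s lab results.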

So if you wanted to do a replication study right, you’d have to move from the influencer’s backlit desk to a proper research lab, and do this multiple times.

- You can’t use one person. You need a large, diverse sample of participants of different ages and genders and, most importantly, a wide spectrum of fitness levels. Essentially, you need to include anyone who might use the watch.

- Establish the “Gold Standard”: Every single participant would get the same “gold standard” lab test to find their true VO2max. This is our unassailable “truth” value.

- Control the Variables: You would ensure every participant’s exact lab-measured weight, height, and age are entered into every device. You’d also need to ensure they do the right sort of runs that lead to accurate VO2max estimations. This removes the “garbage in, garbage out” problem that plagues personal use.

Statistics time

Only then, when you have a spreadsheet with hundreds of “True” values next to hundreds of “Estimated” values, can the real statistical work begin. There are a number of ways we can assess “accuracy” (and precision, and calibration).

Mean Absolute Error (MAE) is the most basic measure of accuracy. It is simply “on average, how far off is the watch?” You take the difference between the watch and the lab for every person, ignore the plus/minus sign, and average the results. An MAE of 1.5 would mean that, for the average user, the watch is only 1.5 ml/kg/min away from the true lab score. For some individuals the error may be higher, for some it might be lower, but on average it’s good. This is far more powerful than a single anecdote, especially when that single person might be at one extreme of the population used to derive the algorithm.
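As a quick sketch, the MAE calculation looks like this. The five lab/watch pairs are invented for illustration and the function name is my own; a real study would have hundreds of rows.

```python
def mean_absolute_error(lab, watch):
    """Average size of the watch-minus-lab error, ignoring its sign."""
    if len(lab) != len(watch) or not lab:
        raise ValueError("need two non-empty lists of equal length")
    return sum(abs(w - l) for l, w in zip(lab, watch)) / len(lab)

# Hypothetical paired results in ml/kg/min (lab first, then watch).
lab = [38.0, 45.0, 52.0, 57.0, 63.0]
watch = [40.0, 46.0, 52.0, 56.0, 60.0]

mae = mean_absolute_error(lab, watch)

# The signed average shows why the sign is dropped:
# over- and underestimates cancel and flatter the watch.
signed_mean_error = sum(w - l for l, w in zip(lab, watch)) / len(lab)

print(f"MAE = {mae:.1f}, signed mean error = {signed_mean_error:+.1f}")
```

In this made-up sample the signed errors nearly cancel while the MAE does not, which is exactly why the absolute value is used.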

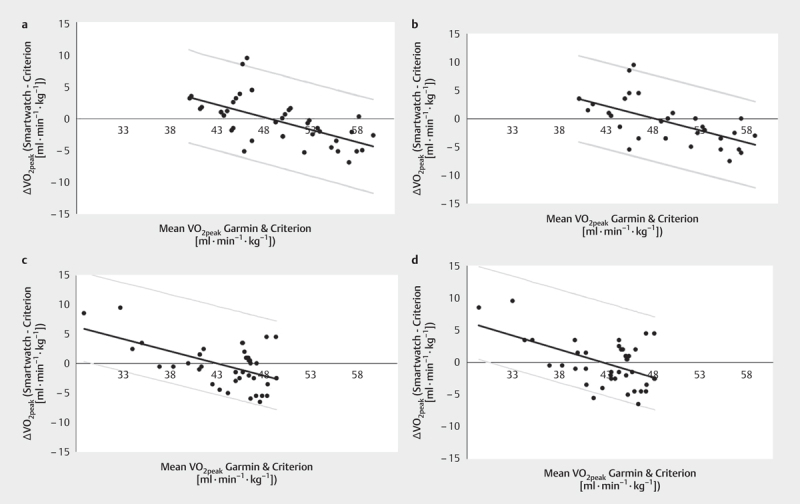

But the number by itself is of limited value. If I had to choose one representation, even if it doesn’t make for sexy YouTube viewing, I’d say the Bland-Altman Plot is the single most important tool for a study like this. This is where we see if the algorithm is “fair.” A Bland-Altman plot doesn’t just look at the error; it looks to see if the error has a pattern by plotting the difference between the watch and the lab (the error) against the average of the two (the participant’s general fitness).

A good algorithm will result in points randomly scattered around the “0” line. This means the watch’s errors are random, which is good! It’s not perfect, but it’s not biased. Meanwhile, if the algorithm is biased, the points will sit consistently above or below zero, or form a clear, sloped line.
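The numbers behind a Bland-Altman plot can be sketched in a few lines. This is a minimal version under my own assumptions: the data are invented, the function name is hypothetical, the limits of agreement use the conventional mean ± 1.96 standard deviations, and the slope comes from a plain least-squares fit of the differences on the means.

```python
from statistics import mean, stdev

def bland_altman(lab, watch):
    """Return mean bias, 95% limits of agreement and the trend slope."""
    diffs = [w - l for l, w in zip(lab, watch)]        # y-axis: error
    means = [(w + l) / 2 for l, w in zip(lab, watch)]  # x-axis: fitness
    bias = mean(diffs)
    sd = stdev(diffs)
    limits = (bias - 1.96 * sd, bias + 1.96 * sd)
    # Least-squares slope of error against fitness: ~0 means no pattern.
    m_bar = mean(means)
    sxx = sum((m - m_bar) ** 2 for m in means)
    sxy = sum((m - m_bar) * (d - bias) for m, d in zip(means, diffs))
    return {"bias": bias, "limits": limits, "slope": sxy / sxx}

# Hypothetical paired results in ml/kg/min (lab first, then watch).
lab = [38.0, 45.0, 52.0, 57.0, 63.0]
watch = [40.0, 46.0, 52.0, 56.0, 60.0]

result = bland_altman(lab, watch)
print(result)
```

Here the average bias is close to zero, yet the slope is clearly negative: the invented watch reads high for the less fit and low for the fittest, a pattern that a single average error would hide.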

I’m not the first to make this suggestion. This study assessed just that for the Venu SQ, and this one for the Forerunner 245, both of which use different generations of the Garmin/Firstbeat VO2max algorithm.

Both studies demonstrated the same systematic bias - although the algorithm was fairly good, it tended to underestimate VO2max in the fittest individuals and overestimate it in the least fit. This is a critical finding that an “n=1” test from a fit YouTuber would never uncover.

Linked to this, it would be useful to show calibration plots: over what range, and for whom, is the VO2max estimation useful, and when does it fall apart?
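One crude way to get at this without a plot is to group participants into fitness bands and look at the average error in each. The sketch below does that with invented data; the 10 ml/kg/min band width and the function name are arbitrary choices of mine, not taken from either study.

```python
from collections import defaultdict
from statistics import mean

def error_by_band(lab, watch, band_width=10.0):
    """Mean signed error (watch - lab) per band of lab VO2max."""
    bands = defaultdict(list)
    for l, w in zip(lab, watch):
        lower_edge = int(l // band_width) * band_width
        bands[lower_edge].append(w - l)
    return {edge: mean(errs) for edge, errs in sorted(bands.items())}

# Hypothetical paired results in ml/kg/min (lab first, then watch).
lab = [34.0, 38.0, 45.0, 47.0, 52.0, 57.0, 63.0, 66.0]
watch = [37.0, 40.0, 46.0, 47.0, 52.0, 56.0, 60.0, 62.0]

per_band = error_by_band(lab, watch)
for edge, err in per_band.items():
    print(f"lab VO2max {edge:.0f}-{edge + 10:.0f}: mean error {err:+.1f}")
```

With these made-up values the estimate is within about 1 ml/kg/min in the middle bands and drifts by several points at either extreme, which is the kind of "useful here, falls apart there" picture a calibration plot would show.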

So ultimately, should you trust your watch?

Your watch’s VO2max number is probably not “accurate.” But without a lab test, you have no way of knowing either way. Does it matter? Many argue that VO2max comparisons are essentially willy measuring contests for endurance athletes, and results are what matter. Nobody other than my vanity cares what my Garmin claims my VO2max is, but my teammates do care when I get dropped on a climb.

The real value of your watch isn’t accuracy - it’s precision. You shouldn’t care about the absolute number, but you should care about the trend. If your watch gave you a 53 in January and, after three months of hard training, it now gives you a 56, that is valuable. The algorithm is precisely (if not accurately) detecting a positive change in your fitness. That’s what you should be tracking.

So let’s not be quick to judge a complex algorithm by a single anecdote. The old adage of “more evidence needed” applies here: these influencer videos are not enough to draw conclusions about how good these devices are at measuring trends. That said, my own experience suggests that VO2max improvements are correlated with performance improvements (although other metrics of fitness may be more important, depending on your goal).

Ultimately, whichever device you choose, the trend is what matters - along with your progress towards more tangible and directly measurable goals.